Multicast Routing in AWS

Consider for a moment that you have an application running on a server that needs to push some data out to multiple consumers and that every consumer needs the same copy of the data at the same time. The canonical example is live video. Live audio and stock market data are also common examples. At the re:Invent conference in 2019, AWS announced support for multicast routing in Amazon Virtual Private Cloud (VPC). This blog post provides a walkthrough of configuring and verifying multicast routing in a VPC.

The Components of Multicast

Just so we're on the same page as far as terminology, here are the (non-AWS specific) terms used when talking about multicast:

- Sender

  - The node(s) that are sourcing traffic out towards receivers. Importantly, senders don't actually know if any receivers exist; they just blindly send. It's up to the network to know if there are receivers and to get the sender's traffic to the receivers.

- Receiver (also known as a "member" or "group member")

  - The node(s) that want to receive multicast traffic. The network needs to be informed of a node's intention to receive traffic either manually or via some sort of signalling from the node.

- Group

  - Refers to the collection of sender(s) and receiver(s) that are all sending/listening to the same multicast IP address. Usually though, when someone says "group", they're just talking about the multicast IP address.

- Multicast IP address

  - An IP address in the range 224.0.0.0 to 239.255.255.255. Note that multicast addresses don't have subnet masks, nor are there broadcast or network addresses. Addresses in the 239.x.x.x range are private and available for use by organizations for their own purposes.

- Multicast router

  - A router that has been specifically configured to route multicast traffic. It may also run and participate in multicast control plane signalling.
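To make the address ranges in the list above concrete, here is a minimal sketch using Python's standard ipaddress module. The function name classify_group and the label strings are my own, chosen only for illustration.

```python
import ipaddress

# 224.0.0.0/4 spans 224.0.0.0 through 239.255.255.255
MULTICAST_RANGE = ipaddress.ip_network("224.0.0.0/4")
# 239.x.x.x, the block organizations can use for their own purposes
PRIVATE_MULTICAST_RANGE = ipaddress.ip_network("239.0.0.0/8")


def classify_group(address: str) -> str:
    """Return a rough label for an IPv4 address with respect to multicast."""
    ip = ipaddress.ip_address(address)
    if ip not in MULTICAST_RANGE:
        return "not multicast"
    if ip in PRIVATE_MULTICAST_RANGE:
        return "multicast (239.x.x.x, private use)"
    return "multicast"
```

For example, classify_group("239.10.10.10") lands in the private-use block, while a unicast address such as 172.31.44.187 is reported as not multicast.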

The multicast router warrants a deeper explanation. Routers by default do not forward multicast traffic. This is for a few reasons: 1/ most networks don't need or use routed multicast, 2/ routing multicast requires pre-planning and a certain level of bootstrapping for things to work properly, and 3/ multicast routing requires additional control plane protocols that unicast routing does not use. For multicast routing to work, a network engineer needs to take a number of explicit actions in the network. Bear these facts in mind as you read on because they will help explain why some of the steps described below are necessary in AWS.

Some Notes for the Network Engineers

If you're a network engineer and understand multicast, these next few lines will likely save you some head scratching. Multicast routing in an AWS VPC...

- Does not use PIM

- Relieves you of the need to decide between sparse, dense, or bidir

- Relieves you of the need to create, manage, and maintain a rendezvous point

- Does not have a reverse path forwarding check

A VPC is a true software-defined network. It knows where every host and IP address sits, there are no logical loops, and perhaps most importantly, there is no Layer 2 to contend with. AWS handles these multicast functions that would otherwise have to be managed by an engineer in an on-prem network.

Update Dec 19, 2020: Since writing this post, support for signalling group membership using IGMP has launched. See the AWS blog for details. This post still shows the static group membership method.

Configuring VPC Multicast Routing

Multicast routing in a VPC is built on top of Transit Gateway (TGW). The Transit Gateway fulfills the function of the multicast router.

Even if the sender and all of the receivers are in the same VPC, even if they're in the same subnet in the VPC, there is still a need for a multicast-enabled TGW and a multicast domain as described below.

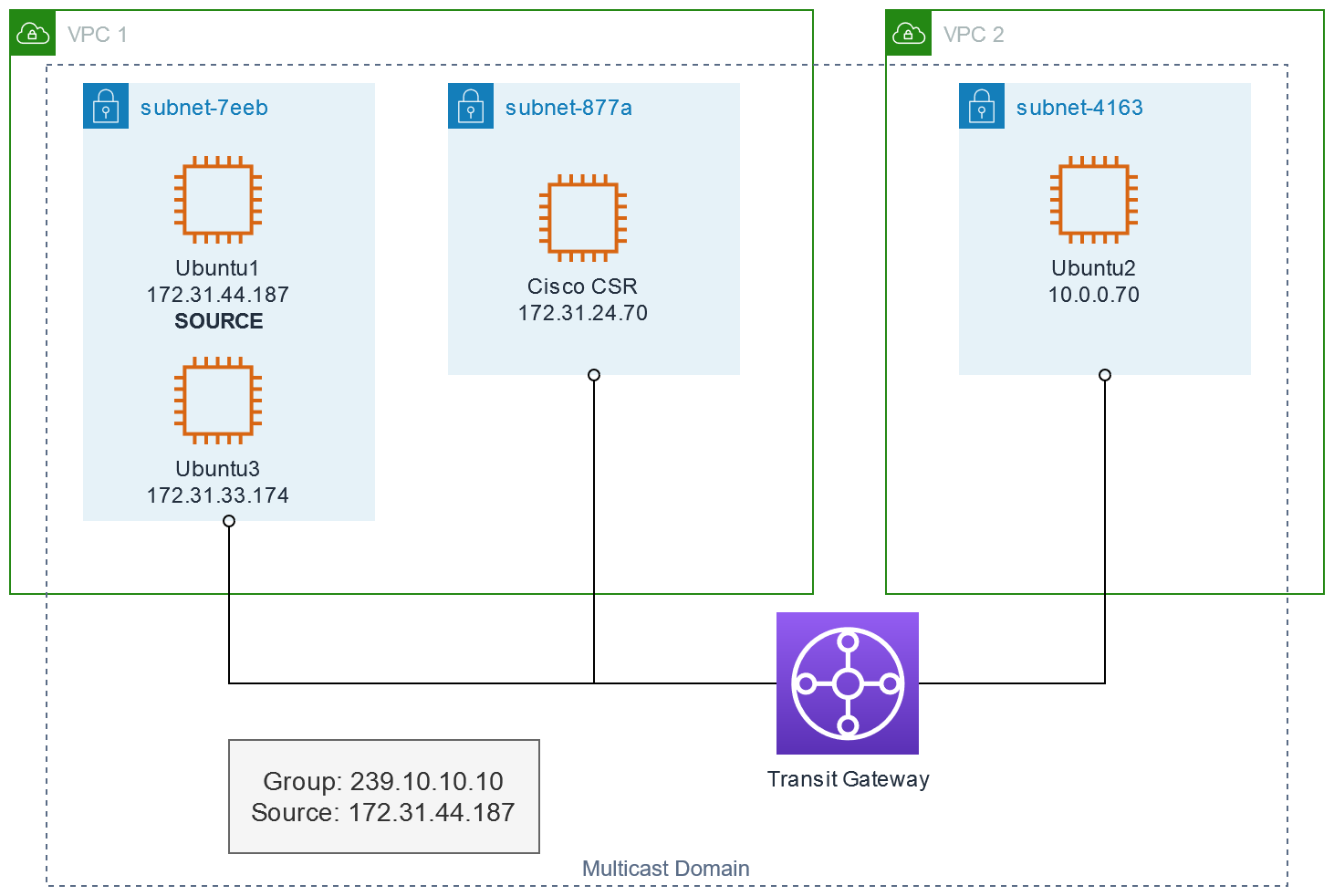

This is the topology I'm going to configure:

- One source, Ubuntu1 @ 172.31.44.187, sending to the group 239.10.10.10

- Three receivers:

  - Ubuntu3, in the same subnet as the source

  - Cisco CSR, in the same VPC as the source but a different subnet

  - Ubuntu2, in a different VPC from the source

Configure the Receivers

First, to establish a baseline showing that multicast does not yet function, here's how I've joined the receivers to the group. The Cisco CSR is the easiest:

CSR(config-if)#ip igmp join 239.10.10.10

CSR(config-if)#^Z

CSR#show ip igmp int g1

GigabitEthernet1 is up, line protocol is up

Internet address is 172.31.24.70/20

IGMP is enabled on interface

[...]

Multicast groups joined by this system (number of users):

239.10.10.10(1)

On the Linux instances, I used a piece of software called Static Multicast Routing Daemon (smcroute, for short).

The reason I have to statically join the group is because I'm not actually running a multicast-enabled application here; as you'll see below, I'm just using ping to generate multicast traffic. Since I'm not running an application on the receivers that will join the group, I need to artificially cause the receivers to join.

On the Ubuntu receivers, I've installed smcroute, launched it, and issued smcroute -j to have it join the group:

sudo apt-get update

sudo apt-get install smcroute

sudo smcroute -d

sudo smcroute -j ens5 239.10.10.10
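For comparison, a real multicast-enabled application would normally join the group itself rather than rely on smcroute. The sketch below shows roughly how that looks with Python's standard socket module. The function names are my own, and the UDP port you pass in is an assumption on your part since this post only uses ping.

```python
import socket


def build_mreq(group: str, interface_ip: str = "0.0.0.0") -> bytes:
    """Pack an ip_mreq structure: group address, then local interface address.

    An interface address of 0.0.0.0 lets the kernel pick the interface.
    """
    return socket.inet_aton(group) + socket.inet_aton(interface_ip)


def open_receiver(group: str, port: int, interface_ip: str = "0.0.0.0") -> socket.socket:
    """Create a UDP socket bound to `port` and join the multicast group."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    # IP_ADD_MEMBERSHIP is the socket option that performs the group join
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP,
                    build_mreq(group, interface_ip))
    return sock
```

A receiver would then call something like open_receiver("239.10.10.10", 5007) and loop on recvfrom(); the port number 5007 is purely hypothetical.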

Since I'll be testing with ICMP pings, I also need to tweak a sysctl to tell the kernel to respond to ICMP echo requests received via multicast:

sudo sysctl net.ipv4.icmp_echo_ignore_broadcasts=0

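(I know the sysctl name only mentions broadcasts, but the kernel documentation describes it as covering ICMP echo requests received via broadcast or multicast, so it affects multicast pings as well.)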

Verify the group has been joined:

ubuntu@ubuntu3:~$ ip maddr show dev ens5

2: ens5

[...]

inet 239.10.10.10

[...]

Configure the Source

On Ubuntu1, the source, no special configuration is needed; it can just start sending to the group.

ubuntu@ubuntu1:~$ ping -c3 239.10.10.10

PING 239.10.10.10 (239.10.10.10) 56(84) bytes of data.

--- 239.10.10.10 ping statistics ---

3 packets transmitted, 0 received, 100% packet loss, time 2051ms

As expected, there are no replies, not even from Ubuntu3 which is sitting in the same subnet.
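If the source were a real application instead of ping, sending to the group would be about as simple. Here is a minimal sketch with Python's socket module; the function names, the default TTL value, and any port you choose are my own assumptions. Linux defaults the multicast TTL to 1, and the ping command above does not set one, so treat the ttl parameter as a knob you may or may not need.

```python
import socket


def open_sender(ttl: int = 16) -> socket.socket:
    """Create a UDP socket for sending multicast with an explicit TTL."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    # Without this option, Linux sends multicast datagrams with a TTL of 1
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_MULTICAST_TTL, ttl)
    return sock


def send_to_group(sock: socket.socket, payload: bytes, group: str, port: int) -> int:
    """Send one datagram to the group; no join is needed on the sending side."""
    return sock.sendto(payload, (group, port))
```

Note that the sender never joins anything, which matches the earlier description: it just blindly sends and leaves delivery to the network.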

Build the Transit Gateway and Multicast Domain

First step: create a multicast-enabled TGW.

Enabling a TGW for multicast forwarding can only be done at the time a TGW is created; you cannot modify an existing TGW to enable multicast. For this reason, you have to create a brand new TGW when configuring multicast. However, that TGW can then be used for multicast and unicast forwarding.

Check the Transit Gateway FAQ for a list of AWS Regions where multicast is supported.

- I'm going to do all the configuration via the AWS Console. I'm logged in as a user that has the AdministratorAccess policy so I can create VPC and Transit Gateway resources.

- I navigate to VPC > Transit Gateways and select Create Transit Gateway.

- I'm not going to cover all of the TGW options in this post; please refer to the TGW documentation for a description of what each option is for.

- Check the option Multicast support.

- Create the TGW.

After the TGW reaches the available state, I can attach the TGW to the two VPCs.
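I did everything in the console, but the same step can be scripted. The sketch below assumes `ec2` is a boto3 EC2 client (for example, boto3.client("ec2")); the function names and the description string are my own. It only sets the multicast option and leaves every other TGW option at its default.

```python
def create_multicast_tgw(ec2, description: str = "multicast-demo") -> str:
    """Create a TGW with multicast enabled and return its ID.

    Multicast support has to be requested here, at creation time.
    """
    response = ec2.create_transit_gateway(
        Description=description,
        Options={"MulticastSupport": "enable"},
    )
    return response["TransitGateway"]["TransitGatewayId"]


def tgw_is_available(ec2, tgw_id: str) -> bool:
    """Report whether the TGW has reached the 'available' state."""
    response = ec2.describe_transit_gateways(TransitGatewayIds=[tgw_id])
    return response["TransitGateways"][0]["State"] == "available"
```

A script would poll tgw_is_available() with a short sleep between calls before moving on to the attachments.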

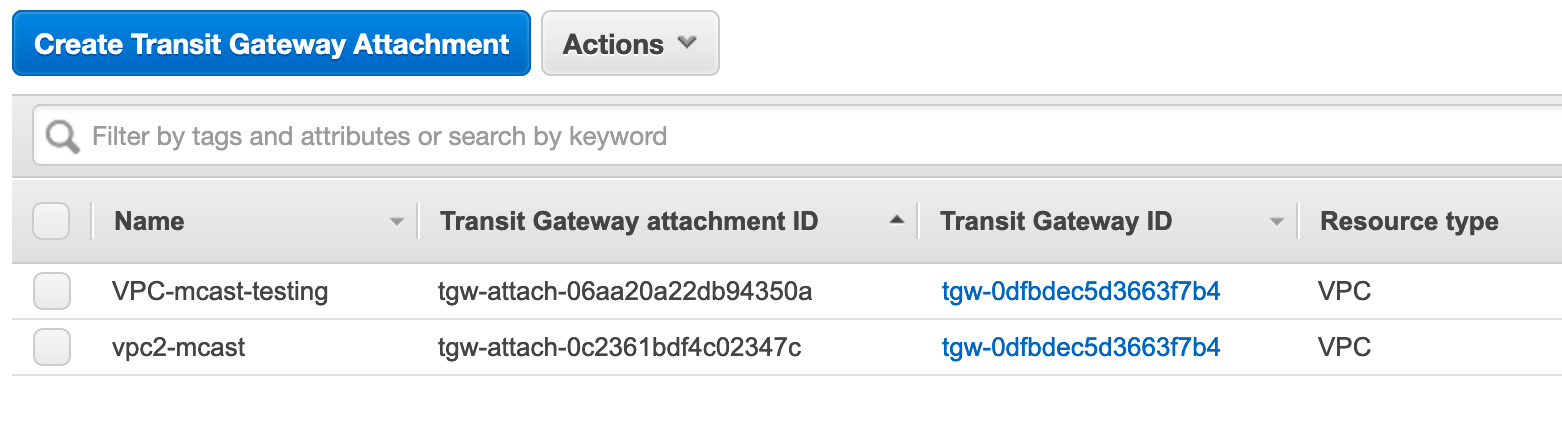

- Still in the VPC Console, I navigate to Transit Gateway Attachments > Create Transit Gateway Attachment.

- I select the TGW I just created in the Transit Gateway ID dropdown.

- Select VPC as the attachment type.

- Select the VPC ID of the correct VPC in the dropdown.

- Select Create attachment.

- I repeat this for the second VPC.
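The attachment steps above could be scripted along these lines. Again, `ec2` is assumed to be a boto3 EC2 client, and the function name and all IDs are placeholders of my own.

```python
def attach_vpc(ec2, tgw_id: str, vpc_id: str, subnet_ids) -> str:
    """Attach one VPC to the TGW and return the attachment ID."""
    response = ec2.create_transit_gateway_vpc_attachment(
        TransitGatewayId=tgw_id,
        VpcId=vpc_id,
        SubnetIds=list(subnet_ids),
    )
    return response["TransitGatewayVpcAttachment"]["TransitGatewayAttachmentId"]
```

Calling attach_vpc() once per VPC mirrors repeating the console steps for the second VPC.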

Next I have to create a multicast domain.

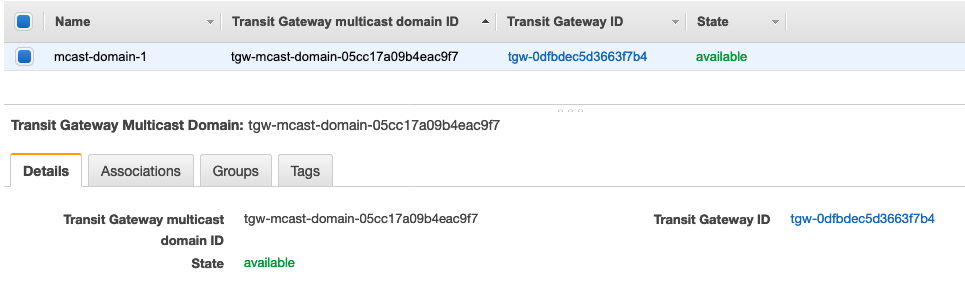

- In the VPC console, I click Transit Gateway Multicast > Create Transit Gateway Multicast Domain.

- I fill in the form with the most important input being the Transit Gateway ID; I select the ID that belongs to the multicast-enabled TGW I just created.

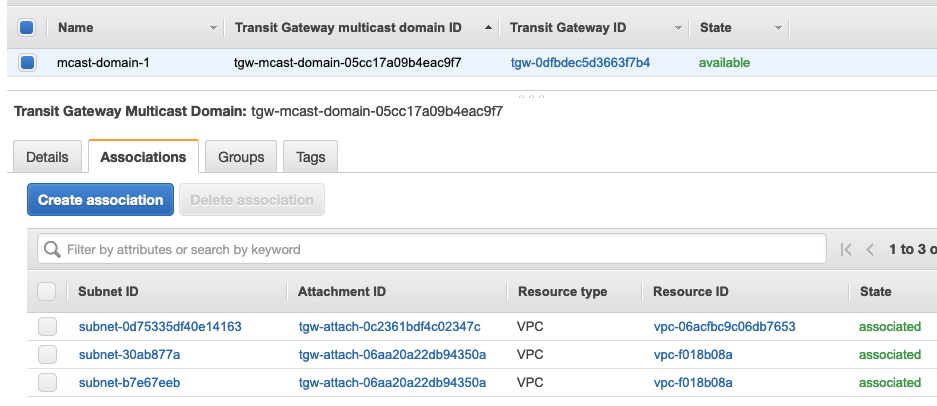

Now I need to associate each subnet where the sender and receivers reside with the multicast domain. This is what will allow sending/receiving multicast traffic in the subnets.

- While looking at the multicast domain, I click the Associations tab and Create association.

- I choose the TGW attachment to associate to the domain and then select the subnet(s) on that attachment that have a sender or receivers.

- I repeat these steps for each attachment and its subnet(s).

Once finished, I have 3 associations representing the 3 subnets in my topology.
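As a rough scripted equivalent of creating the domain and associating subnets, the sketch below again assumes `ec2` is a boto3 EC2 client. The function names and IDs are hypothetical.

```python
def create_domain(ec2, tgw_id: str) -> str:
    """Create a multicast domain on the multicast-enabled TGW."""
    response = ec2.create_transit_gateway_multicast_domain(TransitGatewayId=tgw_id)
    return response["TransitGatewayMulticastDomain"]["TransitGatewayMulticastDomainId"]


def associate_subnets(ec2, domain_id: str, attachment_id: str, subnet_ids) -> None:
    """Associate subnets reachable through one attachment with the domain."""
    ec2.associate_transit_gateway_multicast_domain(
        TransitGatewayMulticastDomainId=domain_id,
        TransitGatewayAttachmentId=attachment_id,
        SubnetIds=list(subnet_ids),
    )
```

In my topology that would mean one associate_subnets() call per attachment, covering the three subnets that hold the sender and receivers.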

The foundational elements are now built: I have a source, receivers, and a multicast router, and I've associated the right subnets with the multicast domain. The next steps will program the multicast domain so it knows who the source and receivers are so that traffic can start to flow between them.

You may have noticed I keep referring to "sender" or "source" in the singular form. That's deliberate. You can only have 1 sender per group. Attempting to add an additional sender for the same group will trigger an error.

Programming the Multicast Forwarding Table

The multicast forwarding table informs the network who the sender is and who the receiver(s) are for a given group. By knowing the {sender, receivers, group IP address} tuple, the network is able to forward multicast traffic.

Multicast forwarding is not configured in the regular VPC routing table(s). There is no need to configure any multicast routes in those tables.
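To picture the {sender, receivers, group IP address} tuple, here is a toy model in Python. It is only a mental aid, not a description of how AWS implements the table. The class names are my own, the network interface IDs are placeholders, and the one-sender check simply mirrors the constraint I mentioned earlier.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Set


@dataclass
class GroupEntry:
    source: Optional[str] = None                      # sender's network interface ID
    members: Set[str] = field(default_factory=set)    # receivers' network interface IDs


class ForwardingTable:
    """Toy model: group IP address -> one source plus a set of members."""

    def __init__(self) -> None:
        self._groups: Dict[str, GroupEntry] = {}

    def add_source(self, group: str, eni: str) -> None:
        entry = self._groups.setdefault(group, GroupEntry())
        # Model the "one sender per group" constraint as an error
        if entry.source not in (None, eni):
            raise ValueError(f"group {group} already has source {entry.source}")
        entry.source = eni

    def add_member(self, group: str, eni: str) -> None:
        self._groups.setdefault(group, GroupEntry()).members.add(eni)

    def lookup(self, group: str) -> GroupEntry:
        """Return the entry for a group, or an empty entry if none exists."""
        return self._groups.get(group, GroupEntry())
```

With one add_source() call and three add_member() calls for 239.10.10.10, lookup() returns the same shape of information the multicast domain ends up holding for my topology.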

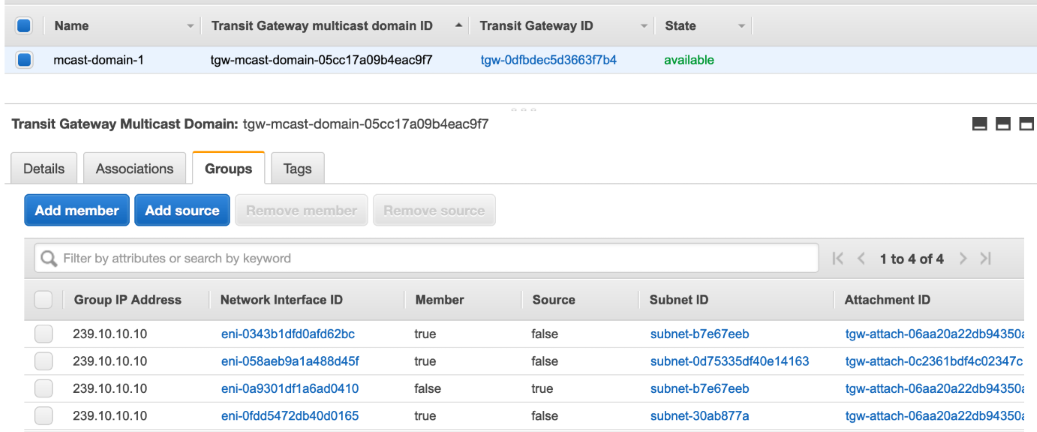

While still looking at the multicast domain, I click the Add source button.

- In the Group IP Address box, type 239.10.10.10

- Check the network interface(s) that are attached to the sender compute instance

- Click Add sources

When picking the interface of the sender, the AWS Console doesn't show the IP address of the interface. I have to identify the sender by either its instance ID or the network interface ID. I used the Amazon EC2 console to look at my sender instance and find both of these items. The same applies for the receivers.

Back on the multicast domain page, I click Add member and I repeat the steps above for each of the receivers.

When I'm finished I have a list of 4 network interfaces on the Groups tab: 1 for the sender and 3 for the receivers.
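The Add source and Add member steps map onto two API calls. The sketch below assumes `ec2` is a boto3 EC2 client; the function name register_group and every ID shown in the usage note are placeholders of my own.

```python
def register_group(ec2, domain_id: str, group_ip: str,
                   source_eni: str, member_enis) -> None:
    """Register one source and its members for a group in a multicast domain."""
    # The sender: a single network interface for this group
    ec2.register_transit_gateway_multicast_group_sources(
        TransitGatewayMulticastDomainId=domain_id,
        GroupIpAddress=group_ip,
        NetworkInterfaceIds=[source_eni],
    )
    # The receivers: one network interface per group member
    ec2.register_transit_gateway_multicast_group_members(
        TransitGatewayMulticastDomainId=domain_id,
        GroupIpAddress=group_ip,
        NetworkInterfaceIds=list(member_enis),
    )
```

For my topology this would be a single call such as register_group(ec2, domain_id, "239.10.10.10", sender_eni, [ubuntu3_eni, csr_eni, ubuntu2_eni]), where each variable holds the network interface ID looked up in the EC2 console.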

It takes time for the TGW to have its forwarding tables actually update with what you've configured in the console. Have some patience when you're making source/member changes to see those changes actually take effect in the data plane.

Configure Security Groups

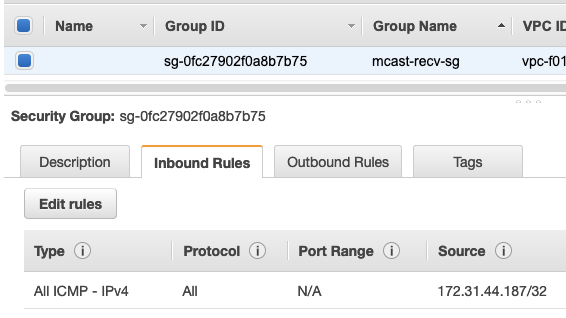

Just like unicast traffic, multicast traffic is subject to Security Groups (SGs). Even if the network is configured properly and everything is set to go, if the SGs aren't allowing the multicast traffic through, nothing is going to work.

The receivers must be configured with inbound SG rules that permit traffic from the sender. This is much the same as allowing unicast traffic inbound.

The inbound SG rules must specify the IP address of the sender. Using an SG that the sender belongs to as the source is not supported and will not allow the multicast traffic through.

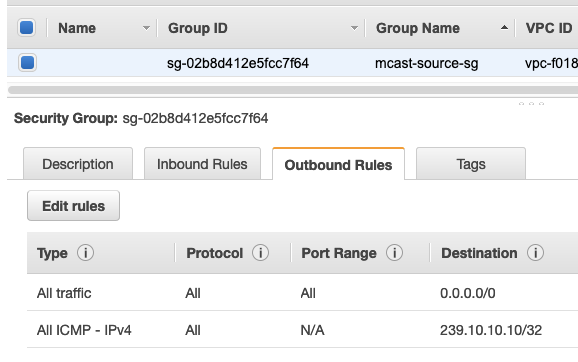

For the sender, the SG must be configured with an outbound rule that allows traffic to the multicast group IP address. By default this isn't an issue because SGs allow all outbound traffic, but I recommend you peek at the SG(s) on the sender anyways just to make sure. This SG has an explicit rule to allow the multicast traffic:

An interesting side effect of using ping to test multicast is that the receivers actually send a reply to the source; typically, multicast traffic just flows one way. To accommodate the reply, the SG on the source must also allow ICMP Echo Replies inbound.
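For the ping test specifically, the inbound rules could be expressed as the IpPermissions structures that the EC2 security group API accepts. This is a sketch under my own naming; the helper functions and rule descriptions are hypothetical, and the rules are keyed on IP addresses rather than on a source SG, as noted above.

```python
ICMP_ECHO_REQUEST = 8
ICMP_ECHO_REPLY = 0


def icmp_permission(icmp_type: int, cidr: str, description: str) -> dict:
    """Build one inbound ICMP rule for a single source CIDR."""
    return {
        "IpProtocol": "icmp",
        "FromPort": icmp_type,  # for ICMP rules, FromPort carries the ICMP type
        "ToPort": -1,           # and ToPort the ICMP code; -1 means any code
        "IpRanges": [{"CidrIp": cidr, "Description": description}],
    }


def receiver_ingress(sender_ip: str) -> list:
    """Inbound rule for a receiver: echo requests from the sender's IP."""
    return [icmp_permission(ICMP_ECHO_REQUEST, f"{sender_ip}/32", "multicast ping from sender")]


def sender_ingress(receiver_ips) -> list:
    """Inbound rules for the sender: echo replies from each receiver."""
    return [icmp_permission(ICMP_ECHO_REPLY, f"{ip}/32", "ping reply from receiver")
            for ip in receiver_ips]
```

With a boto3 EC2 client, these lists would be passed as ec2.authorize_security_group_ingress(GroupId=..., IpPermissions=receiver_ingress("172.31.44.187")), with the group ID left for you to fill in.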

Verification

Now that everything is configured both in the network and on the sender and receivers, I can repeat the ping test from Ubuntu1.

ubuntu@ubuntu1:~$ ping -c3 239.10.10.10

PING 239.10.10.10 (239.10.10.10) 56(84) bytes of data.

64 bytes from 172.31.33.174: icmp_seq=1 ttl=64 time=0.523 ms

64 bytes from 10.0.0.70: icmp_seq=1 ttl=63 time=1.49 ms (DUP!)

64 bytes from 172.31.24.70: icmp_seq=1 ttl=255 time=1.90 ms (DUP!)

64 bytes from 172.31.33.174: icmp_seq=2 ttl=64 time=0.272 ms

64 bytes from 10.0.0.70: icmp_seq=2 ttl=63 time=1.10 ms (DUP!)

64 bytes from 172.31.24.70: icmp_seq=2 ttl=255 time=1.41 ms (DUP!)

64 bytes from 172.31.33.174: icmp_seq=3 ttl=64 time=0.245 ms

--- 239.10.10.10 ping statistics ---

3 packets transmitted, 3 received, +4 duplicates, 0% packet loss, time 2003ms

rtt min/avg/max/mdev = 0.245/0.994/1.906/0.608 ms

It's pretty obvious that this is an improvement over the earlier test 😁.

- I'm getting replies from all three receivers.

- I'm getting replies from a receiver in the same subnet as the sender, in a different subnet but same VPC, and from a different VPC. This covers all three receiver placements in my topology.

- The output is indicating that some of the replies are duplicates (DUP!). That's because the ping command expects just a single reply to each ping request and it's flagging the additional replies as anomalies. In this case, multiple replies are expected.

Based on this output, everything is working nicely.
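If you want to check this kind of output automatically, a small parser is enough. The sketch below is my own helper, not part of ping; it counts replies per responder and reports which expected receivers never answered.

```python
import re
from collections import Counter

# Matches reply lines such as "64 bytes from 10.0.0.70: icmp_seq=1 ttl=63 ..."
REPLY = re.compile(r"bytes from (\d+\.\d+\.\d+\.\d+): icmp_seq=(\d+)")


def responders(ping_output: str) -> Counter:
    """Count how many replies each responding address sent."""
    return Counter(match.group(1) for match in REPLY.finditer(ping_output))


def missing_receivers(ping_output: str, expected) -> set:
    """Return the expected receiver addresses that did not reply at all."""
    return set(expected) - set(responders(ping_output))
```

Feeding it the output above together with the three receiver addresses should yield an empty set from missing_receivers(), which is the scripted version of "everything is working nicely".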

Wrap Up

The configuration to get multicast working is pretty straightforward. Just be aware of the constraints I've called out along the way. And if you're a network engineer, don't expect this multicast to work like multicast on-prem; this isn't your on-prem network 😉.

References

- AWS Transit Gateway Multicast Overview (pay particular attention to the list of considerations)

- AWS Transit Gateway Documentation

- Security Groups for Your VPC

- IP Multicast Technology Overview (this is actually a deep dive on multicast from Cisco)

Disclaimer: The opinions and information expressed in this blog article are my own and not necessarily those of Amazon Web Services or Amazon, Inc.