Monitoring Direct Attached Storage Under ESXi

One of the first things I wanted to do with my ESXi lab box was simulate a hard drive failure to see what alarms ESXi would raise. The exercise has little relevance in the "real world", where ESXi hosts use shared storage in all but the most esoteric installations, but my lab box uses direct attached storage, so I wanted to make sure I understood how ESXi behaves during a drive failure. This post is also a guide for my future self should a drive fail for real :-).

Scenario

My home ESXi box has two drives in a mirror set connected to an LSI 9260-4i. These tests were all done with the default LSI CIM provider that comes with ESXi 4.1u1. I simulated a drive failure by pulling out one of the hot swap drive trays.

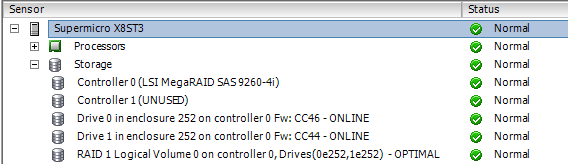

T=0: Everything Normal

This is the view within the vSphere Client when there are no failures. Drives 0 and 1 on enclosure 252 (the LSI 9260-4i) are both ONLINE, and the RAID 1 logical volume is OPTIMAL.

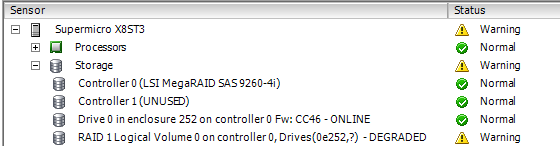

T=1: Drive Fails

Here I've pulled a drive out of the enclosure.

Notice Drive 1 is no longer showing and that the RAID 1 logical volume is showing a DEGRADED state. Also notice how the yellow status indicator rolls up at each level so you can see there is a fault without even drilling down all the way.
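The roll-up behaviour can be pictured as "each level shows the worst status found anywhere beneath it". The following is only a sketch of that idea, not how ESXi implements it: the `Node` class, the `SEVERITY` ordering, and the tree layout are all hypothetical, and the "red" level is an assumption that does not appear in the screenshots above.

```python
from dataclasses import dataclass, field

# Assumed severity ordering (higher is worse). Only green and yellow appear
# in this post; "red" is included purely as an assumption.
SEVERITY = {"green": 0, "yellow": 1, "red": 2}


@dataclass
class Node:
    """One level in a hypothetical hardware status tree."""
    name: str
    own_status: str = "green"
    children: list = field(default_factory=list)

    def rolled_up(self) -> str:
        """Return the worst status of this node and everything below it."""
        worst = self.own_status
        for child in self.children:
            status = child.rolled_up()
            if SEVERITY[status] > SEVERITY[worst]:
                worst = status
        return worst


# Hypothetical tree mirroring the T=1 situation: Drive 1 is gone and the
# RAID 1 logical volume is DEGRADED (shown as yellow).
drive0 = Node("Drive 0")
volume = Node("RAID 1 logical volume", own_status="yellow")
controller = Node("LSI 9260-4i", children=[drive0, volume])
storage = Node("Storage", children=[controller])
host = Node("Host", children=[storage])

if __name__ == "__main__":
    # Every ancestor of the degraded volume reports yellow.
    for node in (host, storage, controller, volume, drive0):
        print(f"{node.name}: {node.rolled_up()}")
```

Taking the maximum severity at each level is what lets a single degraded volume surface at the top of the tree while healthy siblings such as Drive 0 stay green.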

T=2: Drive is Replaced

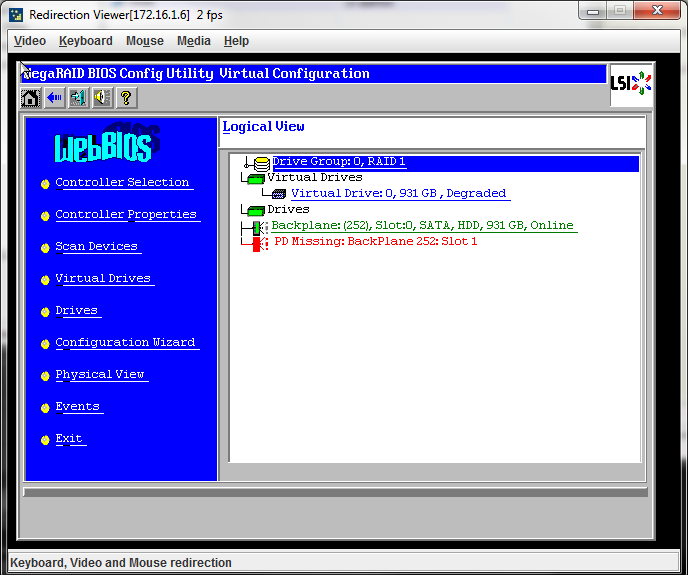

The drive has now been plugged back into the enclosure.

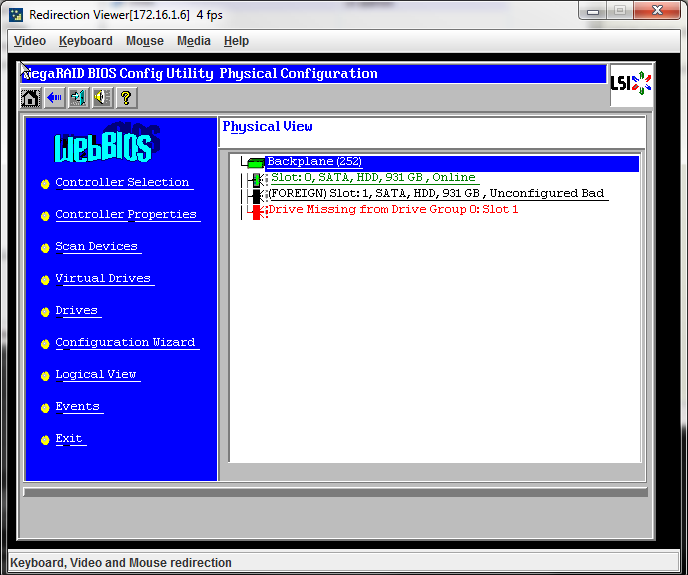

The LSI card does not automatically rebuild the mirror onto the newly replaced drive. The drive is put into the UNCONFIGURED BAD state and requires manual intervention to initiate a rebuild. With the LSI CIM provider included in ESXi 4.1u1 there is no way to initiate an array rebuild (or do any array maintenance, for that matter), so a reboot into the LSI BIOS is necessary.

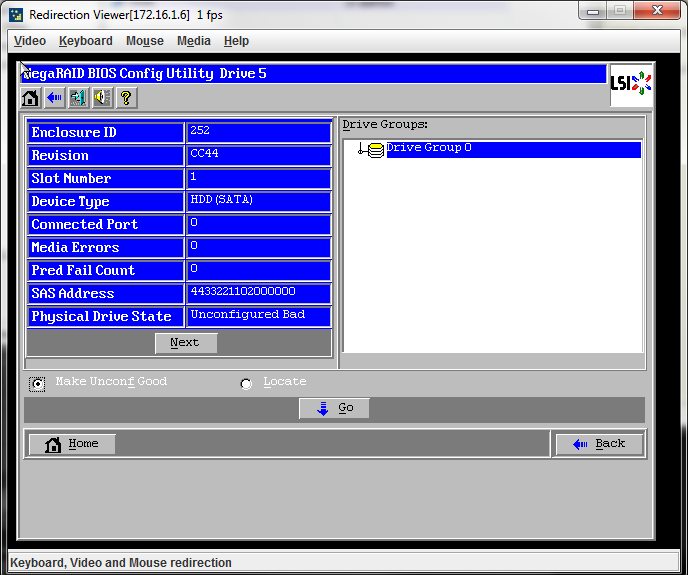

Even though the drive has been physically replaced, the BIOS shows a "PD Missing" on backplane 252, slot 1. By switching to the "physical view" and selecting the drive that's shown as "Unconfigured Bad", the drive can be changed to "Unconfigured Good" by marking the radio button and clicking Go.

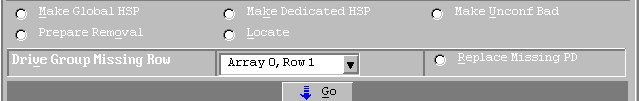

Now that the drive is in a "good" state, it can be added into the array by marking the radio button beside Replace Missing PD and hitting Go.

After that, choose _Rebuild Drive_ and away you go.

Back in the vSphere Client, the host status now shows the drive in a REBUILD state and the RAID volume in a DEGRADED state. Once the rebuild is complete, everything goes green again.

Proactive Monitoring

The vSphere Client has no capability to generate an alarm via email or SNMP when a hardware fault occurs. You have to fire up the client and inspect the hardware status manually, or employ a tool that uses the ESXi API to poll the hardware status and generate its own reports.
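As a rough illustration of what the reporting half of such a tool might look like, here is a minimal sketch that takes a list of already-polled storage elements, picks out anything that is not in a healthy state, and builds an email alert. The polling itself is deliberately left out, since it depends on which API client you use. Everything named here (`Element`, `HEALTHY_STATES`, `find_faults`, `build_alert`, the host name, and the addresses) is hypothetical; only the state names come from the screenshots in this post.

```python
from dataclasses import dataclass
from email.message import EmailMessage

# States treated as healthy, taken from the T=0 screenshot. Anything else
# (DEGRADED, UNCONFIGURED BAD, REBUILD, ...) is reported as a fault.
HEALTHY_STATES = {"ONLINE", "OPTIMAL"}


@dataclass
class Element:
    """One storage element as returned by a (hypothetical) poller."""
    name: str
    state: str


def find_faults(elements):
    """Return the elements whose state is not in HEALTHY_STATES."""
    return [e for e in elements if e.state.upper() not in HEALTHY_STATES]


def build_alert(host, faults, sender, recipient):
    """Build (but do not send) an email describing the faulty elements."""
    msg = EmailMessage()
    msg["Subject"] = f"[{host}] {len(faults)} storage element(s) not healthy"
    msg["From"] = sender
    msg["To"] = recipient
    msg.set_content("\n".join(f"{e.name}: {e.state}" for e in faults))
    return msg


if __name__ == "__main__":
    # Sample data mirroring the state after the drive was plugged back in.
    polled = [
        Element("Drive 0", "ONLINE"),
        Element("Drive 1", "UNCONFIGURED BAD"),
        Element("RAID 1 logical volume", "DEGRADED"),
    ]
    faults = find_faults(polled)
    if faults:
        print(build_alert("esxi-lab", faults,
                          "monitor@example.com", "me@example.com"))
```

Run on a schedule, a script along these lines would send mail (for example via `smtplib`) only when `find_faults` returns something. Note that a drive in the REBUILD state is still reported under this definition, which seems like the safer default for a lab box.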