What the fex is a FEX anyways?

This is a quick, high-level rundown of Cisco's various fabric extender technologies and where each one fits in the data center.

Fabric Extender

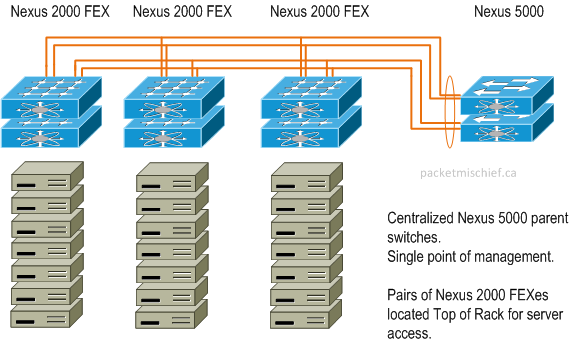

A Fabric Extender (FEX for short) is a companion to a Nexus 5000 or Nexus 7000 switch. Unlike a traditional switch, the FEX has no capability to store a forwarding table or run any control plane protocols; it relies on its parent 5000/7000 to perform those functions. As the name implies, the FEX "extends" the fabric (i.e., the network) out toward the edge devices that require network connectivity. Some of the benefits of using FEXes are:

- They are fully managed as part of the parent switch and do not require independent software upgrades, config backups, or other maintenance tasks.

- They can use Virtual Port Channels (see 4 Types of Port Channels and When They're Used) to connect to redundant parent switches, keeping Spanning Tree out of the uplink path and enabling active/active uplinks.

- They are typically more cost-effective than an equivalent standalone switch.

The analogy that's often used is to think of the FEX as a line card in a Catalyst 6500 (but a card with no ability to make forwarding decisions on its own, i.e., no DFC) and of the parent switch as the supervisor, which manages the cards, runs the control and management planes, and also makes the data plane forwarding decisions. The difference is that, instead of sitting inside the same sheet-metal chassis, the FEX and its parent are connected with 10Gig transceivers and can be physically spread throughout the data center.

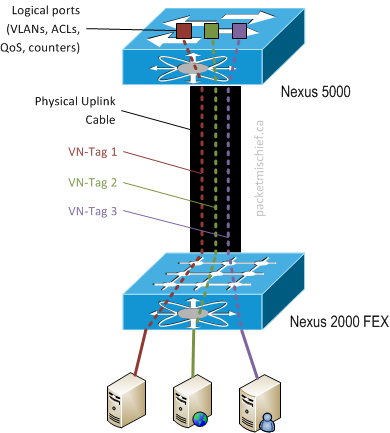

Ok, so if the FEX ports are all configured from the parent switch, how does the parent know which FEX port a frame came in on and how does the FEX know which port to egress a frame on?

Remember: a FEX has no local configuration. All port configs (ACLs, VLANs, QoS, etc.) are applied and acted upon by the parent switch once the FEX sends an inbound frame to the parent. On the egress side, a FEX has no local forwarding table, so when it receives a frame from the parent, it must be told which port to send the frame out of.

Unlike the link between two traditional switches, the uplink from FEX to parent is not an 802.1Q trunk — traffic is not identified or isolated using VLAN tags. Rather, frames are marked with a VN-Tag that identifies the FEX port. VN-Tags are part of Cisco's pre-standard implementation of the 802.1BR Bridge Port Extension protocol.

When a FEX is uplinked to a parent, a unique tag ID is allocated for each host interface on the FEX. The parent and the FEX mark each frame sent across the uplink with the appropriate tag. Logically, this allows the FEX host ports to show up on the parent switch and look, act, and feel just like physical ports on the parent. The VN-Tag acts like a virtual wire that connects the host port directly to the parent.
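
The exchange described above can be modeled in a few lines of Python. This is a minimal sketch, not Cisco's implementation: the frame is reduced to source MAC, destination MAC, and VLAN; the class names (`ParentSwitch`, `Fex`, `Frame`) are hypothetical; tags are assumed to be allocated sequentially; and flooding of unknown destinations is omitted. What it shows is the split of responsibilities: the FEX only stamps and reads tags, while the parent owns the port configuration and the MAC table. The `Ethernet<fex-id>/1/<port>` names mirror how NX-OS presents FEX host ports on the parent.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    src_mac: str
    dst_mac: str
    vlan: int


class ParentSwitch:
    """Holds all configuration and forwarding state on behalf of the FEX."""

    def __init__(self):
        self.next_tag = 1        # assumption: tags handed out sequentially
        self.tag_to_ifname = {}  # VN-Tag ID -> logical interface on the parent
        self.access_vlan = {}    # per-port config lives here, never on the FEX
        self.mac_table = {}      # MAC -> tag of the host port it was learned on

    def attach_fex(self, fex):
        # Allocate one unique tag per FEX host interface; each one then shows
        # up on the parent as if it were a local port (NX-OS style naming).
        for port in range(1, fex.num_ports + 1):
            tag = self.next_tag
            self.next_tag += 1
            self.tag_to_ifname[tag] = f"Ethernet{fex.fex_id}/1/{port}"
            fex.port_to_tag[port] = tag
        fex.parent = self

    def receive(self, src_tag, frame):
        """Ingress from the FEX uplink: apply config, learn the MAC, forward."""
        ifname = self.tag_to_ifname[src_tag]
        if frame.vlan != self.access_vlan.get(ifname):
            return None                 # port policy enforced on the parent
        self.mac_table[frame.src_mac] = src_tag
        dst_tag = self.mac_table.get(frame.dst_mac)
        if dst_tag is None:
            return None                 # flooding is left out of this sketch
        return dst_tag, frame           # tells the FEX which port to use


class Fex:
    """No forwarding table and no config: just a host port <-> tag mapping."""

    def __init__(self, fex_id, num_ports):
        self.fex_id = fex_id
        self.num_ports = num_ports
        self.port_to_tag = {}
        self.parent = None

    def ingress(self, port, frame):
        # Stamp the frame with its ingress port's tag and let the parent decide.
        # Even port-to-port traffic on the same FEX goes through the parent.
        result = self.parent.receive(self.port_to_tag[port], frame)
        return None if result is None else self.egress(*result)

    def egress(self, tag, frame):
        # The only lookup the FEX performs: which host port owns this tag.
        for port, port_tag in self.port_to_tag.items():
            if port_tag == tag:
                return port, frame
        raise KeyError(tag)


parent = ParentSwitch()
fex = Fex(fex_id=100, num_ports=48)
parent.attach_fex(fex)
parent.access_vlan["Ethernet100/1/1"] = 10
parent.access_vlan["Ethernet100/1/2"] = 10

first = fex.ingress(1, Frame("aa", "bb", 10))   # None: "bb" not learned yet
second = fex.ingress(2, Frame("bb", "aa", 10))  # delivered out host port 1
```

Note that the two hosts sit on the same FEX, yet the second frame still makes a round trip to the parent before the FEX knows to send it out port 1.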

Fabric Extenders are part of the Nexus lineup of products and are branded as the Nexus 2000 Series Fabric Extender.

Adapter FEX

Adapter FEX extends the edge of the fabric right down into the server by putting the functionality of a FEX (as described above) onto a PCI card in the form of a 10Gbit NIC or Converged Network Adapter. This card, called a Virtual Interface Card (VIC), can present multiple Ethernet interfaces (vNICs) and/or Fibre Channel HBAs (vHBAs) on the PCI bus. To the operating system or hypervisor, it looks as though multiple physical cards are installed in the system. The VIC exposes these vNICs and vHBAs to the network by associating their frames with a unique VN-Tag. Once again, the virtual interfaces are configured on the parent switch.
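
A rough model of the VIC idea follows. It is a sketch under stated assumptions: `VirtualInterfaceCard` and `VirtualInterface` are hypothetical names, tags are assigned sequentially, and a plain dictionary (`parent_config`) stands in for the per-interface configuration held on the parent switch. One physical card enumerates several virtual interfaces, each bound to its own VN-Tag, while the settings for each interface live upstream rather than on the server.

```python
from dataclasses import dataclass, field


@dataclass
class VirtualInterface:
    name: str    # what the OS/hypervisor sees, e.g. "eth0" or "fc0"
    kind: str    # "vnic" (Ethernet) or "vhba" (Fibre Channel)
    vn_tag: int  # identifies this interface's frames on the uplink


@dataclass
class VirtualInterfaceCard:
    interfaces: list = field(default_factory=list)

    def add(self, name, kind):
        # Each virtual interface gets its own tag, like a host port on a FEX.
        vif = VirtualInterface(name, kind, vn_tag=len(self.interfaces) + 1)
        self.interfaces.append(vif)
        return vif

    def pci_devices(self):
        # To the OS, each virtual interface looks like a separate adapter.
        return [vif.name for vif in self.interfaces]


# Hypothetical stand-in for config kept on the parent switch, keyed by VN-Tag.
parent_config = {}

vic = VirtualInterfaceCard()
for name in ("eth0", "eth1", "eth2"):
    vif = vic.add(name, "vnic")
    parent_config[vif.vn_tag] = {"vlans": [10, 20]}
for name in ("fc0", "fc1"):
    vif = vic.add(name, "vhba")
    parent_config[vif.vn_tag] = {"vsan": 100}

print(vic.pci_devices())  # ['eth0', 'eth1', 'eth2', 'fc0', 'fc1']
```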

The advantages of Adapter FEX include:

- Management of vNIC/vHBA profiles (i.e., VLAN, ACL, QoS, etc.) is performed in the network by the network team

- Reduced cabling between servers and the network through consolidation onto 10Gbit uplinks

- Fewer physical adapters in servers, which reduces power consumption while still following the best-practice guidance of providing multiple NICs to serve the needs of the hypervisor

- Reduced consumption of switch ports for server uplinks

- VN-Tags are applied in hardware by the adapter, making it extremely difficult for a software process to break out of its vNIC/vHBA
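
The last point can be illustrated with a small sketch. The key assumption, which is a simplification for illustration, is that the adapter picks the tag purely from the vNIC queue a frame was posted to, so any tag-like value the host software writes into the frame is overwritten. `AdapterTx` and the dictionary-based frame are hypothetical.

```python
class AdapterTx:
    """Hypothetical VIC transmit path: the tag comes from the queue, not the frame."""

    def __init__(self, queue_tags):
        # Set once when the vNICs are created; stands in for mapping state
        # held in adapter hardware that host software cannot rewrite.
        self._queue_tags = dict(queue_tags)

    def transmit(self, queue, frame):
        tagged = dict(frame)  # copy, so the caller's frame is left untouched
        # Whatever the software put in the frame, the uplink tag is replaced
        # with the one bound to the queue the frame was sent from.
        tagged["vn_tag"] = self._queue_tags[queue]
        return tagged


adapter = AdapterTx({"vnic0": 7, "vnic1": 8})

# A process on vnic0 tries to impersonate vnic1 by claiming its tag.
forged = {"payload": b"hello", "vn_tag": 8}
sent = adapter.transmit("vnic0", forged)
print(sent["vn_tag"])  # 7
```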

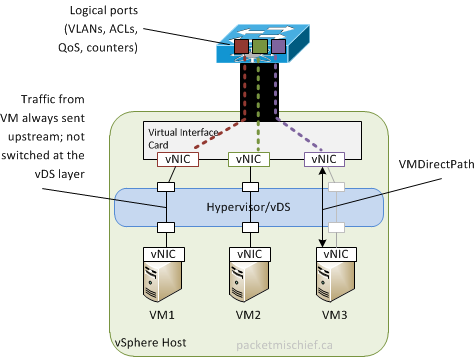

VM-FEX

VM-FEX extends the idea of Adapter FEX and fully integrates it into the hypervisor (currently VMware vSphere, Red Hat KVM, and Hyper-V 3+). With VM-FEX, the virtual switch is eliminated as a point of management within the network. Each VM is given its own virtual NIC on the Virtual Interface Card (VIC); frames received from the VM are marked on the uplink port with a VN-Tag and sent to the upstream switch for policy control and forwarding decisions. By using hypervisor features such as VMDirectPath on vSphere, virtual machines can directly access the PCI resources of the vNIC, bypassing the hypervisor. That, combined with the fact that all switching functions are performed in hardware on the upstream switch, means fewer host CPU cycles are spent processing packets.
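
Because no switching happens on the host, even traffic between two VMs on the same server is sent to the upstream switch, which applies policy and returns the frame with the destination vNIC's tag. The sketch below illustrates that under simplifying assumptions: the classes (`UpstreamSwitch`, `VmFexHost`) are hypothetical, a per-vNIC VLAN is the only policy, and MAC addresses are registered up front instead of learned.

```python
class UpstreamSwitch:
    """Makes every policy and forwarding decision for VM traffic."""

    def __init__(self):
        self.vlan_of_tag = {}  # per-vNIC policy, set by the network team
        self.tag_of_mac = {}
        self.frames_seen = 0   # every VM frame crosses this switch

    def forward(self, src_tag, dst_mac):
        self.frames_seen += 1
        dst_tag = self.tag_of_mac.get(dst_mac)
        if dst_tag is None:
            return None  # unknown destination: dropped in this sketch
        if self.vlan_of_tag[src_tag] != self.vlan_of_tag[dst_tag]:
            return None  # different VLANs: not bridged
        return dst_tag


class VmFexHost:
    """A host with no virtual switch: each VM vNIC maps straight to a VN-Tag."""

    def __init__(self, upstream):
        self.upstream = upstream
        self.tag_of_vm = {}
        self.vm_of_tag = {}

    def add_vm(self, vm, mac, tag):
        self.tag_of_vm[vm] = tag
        self.vm_of_tag[tag] = vm
        # Registered directly to keep the sketch short (stands in for MAC learning).
        self.upstream.tag_of_mac[mac] = tag

    def send(self, vm, dst_mac):
        # No local lookup, even for a VM on this same host: the frame always
        # goes upstream and comes back down with the destination's tag.
        dst_tag = self.upstream.forward(self.tag_of_vm[vm], dst_mac)
        return self.vm_of_tag.get(dst_tag)


switch = UpstreamSwitch()
switch.vlan_of_tag.update({1: 10, 2: 10, 3: 30})  # network team's policy

host = VmFexHost(switch)
host.add_vm("web01", mac="00:00:00:00:00:01", tag=1)
host.add_vm("app01", mac="00:00:00:00:00:02", tag=2)
host.add_vm("db01", mac="00:00:00:00:00:03", tag=3)

print(host.send("web01", "00:00:00:00:00:02"))  # app01
print(host.send("web01", "00:00:00:00:00:03"))  # None (VLAN mismatch)
print(switch.frames_seen)                       # 2
```

The `frames_seen` counter is the flip side of the design: since every frame crosses the upstream switch, that switch can count and police traffic per VM vNIC.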

With VM-FEX, the virtual and physical networks are merged and have a single point of management, policy control and traffic visibility.

- Network configuration (ACLs, VLANs, QoS, etc.) is performed in the network by the network team

- Network configuration is done at a central point, eliminating multiple points of management

- Network visibility down to the VM's vNIC (byte counters, ACLs, QoS profiles, etc.)

- The virtualization team doesn't have to know about or attempt to configure VLANs, port groups, vSwitches, etc.; they just pick a port profile from a drop-down menu
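
The division of labor in the list above can be sketched in two steps: the network team defines named port profiles on the parent switch, and the virtualization team only ever chooses one by name. The `PortProfileCatalog` class, the `PortProfile` fields, and the profile names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PortProfile:
    vlan: int
    qos_policy: str
    acl: str


class PortProfileCatalog:
    """Profiles are owned by the network team on the parent switch."""

    def __init__(self):
        self._profiles = {}
        self.bindings = {}  # VM vNIC -> profile name

    def define(self, name, profile):
        # Network team: full control over VLAN, QoS, and ACL settings.
        self._profiles[name] = profile

    def menu(self):
        # What the virtualization team sees: just the profile names.
        return sorted(self._profiles)

    def attach(self, vm_vnic, name):
        # Virtualization team: picks a name, never touches VLANs or vSwitches.
        if name not in self._profiles:
            raise ValueError(f"unknown port profile: {name}")
        self.bindings[vm_vnic] = name

    def effective_config(self, vm_vnic):
        return self._profiles[self.bindings[vm_vnic]]


catalog = PortProfileCatalog()
catalog.define("web-tier", PortProfile(vlan=10, qos_policy="silver", acl="web-in"))
catalog.define("db-tier", PortProfile(vlan=30, qos_policy="gold", acl="db-in"))

print(catalog.menu())  # ['db-tier', 'web-tier']
catalog.attach("web01/vnic0", "web-tier")
print(catalog.effective_config("web01/vnic0").vlan)  # 10
```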

With integration into VMware vCenter, vMotion events can be signaled to the network so that the associated logical port on the parent switch retains its "stickiness" to the virtual machine and the appropriate vNIC resources are automatically instantiated on the new host. Additionally, the VM vNIC profiles created by the network team on the parent switch are populated into vCenter, which allows the virtualization team to select the network connectivity of a VM from a single drop-down menu.
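
The "stickiness" described above comes down to keying the logical port on the VM rather than on the host it happens to run on. In the minimal sketch below, `LogicalPortTable`, `LogicalPort`, and `vmotion_event` are hypothetical names, and the vCenter signaling is reduced to a plain method call. A migration only changes which host's VIC backs the vNIC; the logical port, its profile, and its counters stay put.

```python
from dataclasses import dataclass


@dataclass
class LogicalPort:
    profile: str
    host: str
    rx_bytes: int = 0  # counters survive a move because the port itself does


class LogicalPortTable:
    """Parent-switch view of VM ports, keyed by VM vNIC rather than by host."""

    def __init__(self):
        self.ports = {}

    def vm_powered_on(self, vm_vnic, profile, host):
        self.ports[vm_vnic] = LogicalPort(profile, host)

    def vmotion_event(self, vm_vnic, new_host):
        # Stand-in for the migration signal from vCenter: same logical port,
        # same profile; only the host backing the vNIC changes.
        port = self.ports[vm_vnic]
        port.host = new_host
        return port


parent = LogicalPortTable()
parent.vm_powered_on("web01/vnic0", "web-tier", host="esx-01")
parent.ports["web01/vnic0"].rx_bytes += 1500

port = parent.vmotion_event("web01/vnic0", new_host="esx-02")
print(port.host, port.profile, port.rx_bytes)  # esx-02 web-tier 1500
```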

Disclaimer: The opinions and information expressed in this blog article are my own and not necessarily those of Cisco Systems.