4 Types of Port Channels and When They're Used

The other day I was catching up on recorded content from Cisco Live! and I saw mention of yet another implementation of port channels (this time called Enhanced Virtual Port Channels). I thought it would make a good blog entry to describe the differences between them, where each is used, and which platforms support each one.

The Plain Old Port Channel

Back in the day, before all the different flavors of port channels came to be, there was the original port channel. This is also called an EtherChannel or a Link Aggregation Group (LAG) depending on what documentation or vendor you're dealing with. This port channel uses Link Aggregation Control Protocol (LACP) or, in the Cisco world, Port Aggregation Protocol (PAgP) to signal the establishment of the channel between two devices. A port channel does a couple of things:

- Increases the available bandwidth between two devices.

- Creates one logical path out of multiple physical paths. Since Spanning Tree Protocol (STP) runs on the logical link and not the physical links, all of the physical links can be in a forwarding state.

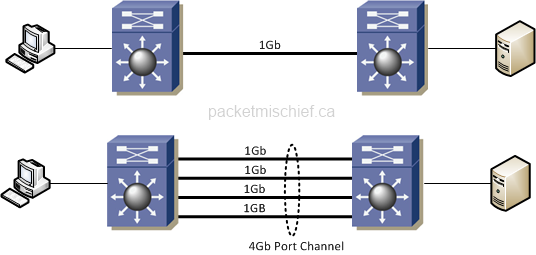

Now, with respect to the first point, it's important to differentiate between bandwidth and throughput. If we have two switches connected together with (1) 1Gb link, our bandwidth is 1Gb and so is our maximum throughput. If we cable up more links so that we have (4) 1Gb links and put them into a port channel, our aggregate bandwidth is now 4Gb but the maximum throughput of any single conversation is still 1Gb. Why is that?

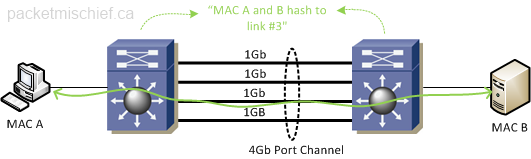

When a switch sends frames across a port channel, it has to use some sort of algorithm to determine which physical link to send each frame on. The algorithm aims to distribute traffic across all the links. To preserve in-order frame delivery and avoid adding jitter, it's general practice to put all frames from the same sender, or between the same sender/receiver pair, on the same link.

Since all frames between a given sender and receiver will always be on the same 1Gb link, the maximum throughput for that conversation is only 1Gb, even though the port channel as a whole can carry 4Gb across many conversations.
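To make the bandwidth versus throughput point concrete, here is a minimal Python sketch of hash-based link selection. The `pick_link` function and its CRC32 hash are assumptions for illustration only; real switches use their own, usually configurable, hashing inputs and algorithms.

```python
import zlib

LINK_SPEED_GBPS = 1   # each member link is 1Gb, as in the example above
NUM_LINKS = 4         # (4) links bundled into one port channel


def pick_link(src_mac: str, dst_mac: str, num_links: int = NUM_LINKS) -> int:
    """Map a sender/receiver pair to one member link (0-based index).

    Hypothetical stand-in for a switch's load-balancing hash.
    """
    key = f"{src_mac}->{dst_mac}".encode()
    return zlib.crc32(key) % num_links


# Every frame of a single conversation hashes to the same member link.
flow = ("00:00:00:00:00:0a", "00:00:00:00:00:0b")
links_used_by_flow = {pick_link(*flow) for _ in range(1000)}

# Many different conversations can land on different member links.
many_flows = [(f"00:00:00:00:00:{i:02x}", "00:00:00:00:00:ff") for i in range(64)]
links_used_by_many = {pick_link(src, dst) for src, dst in many_flows}

print("links used by one flow:", len(links_used_by_flow))
print("links used by 64 flows:", len(links_used_by_many))
print("max throughput of one flow (Gb):", len(links_used_by_flow) * LINK_SPEED_GBPS)
```

Because the hash is deterministic, a single flow can never use more than one link, which is why its throughput is capped at the speed of that one link.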

Plain old port channels can be used between most Ethernet switches, and many server NIC drivers support LACP as well, which allows a port channel between a server and its upstream switch. Note that with plain port channels, each physical link that makes up one end of the port channel must terminate on the same switch. You cannot, for example, have a port channel from a server to two upstream switches.

There is a type of port channel that does support this, called Multi-Chassis EtherChannel (MEC) or Multi-Chassis Link Aggregation Group (MLAG). When a technology such as Cisco's StackWise or Virtual Switching System (VSS) is employed in the network, it's possible to form a MEC between a single device (like a server) and two distinct upstream switches, as long as the switches are participating in the same StackWise stack or VSS.
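The "same switch" rule can be expressed as a small check. This is only a sketch: the chassis names and the `LOGICAL_SWITCH` mapping are made up for illustration, and a real device enforces the rule through LACP negotiation rather than a lookup table.

```python
from typing import Iterable

# Hypothetical inventory: physical chassis -> logical switch it belongs to.
LOGICAL_SWITCH = {
    "sw-a": "vss-1",  # sw-a and sw-b form one VSS pair (or one StackWise stack)
    "sw-b": "vss-1",
    "sw-c": "sw-c",   # standalone switch, so it is its own logical switch
}


def can_bundle(upstream_chassis: Iterable[str]) -> bool:
    """Return True if every member link terminates on the same logical switch."""
    logical = {LOGICAL_SWITCH[chassis] for chassis in upstream_chassis}
    return len(logical) == 1


print(can_bundle(["sw-a", "sw-a"]))  # plain port channel to one chassis
print(can_bundle(["sw-a", "sw-b"]))  # MEC across two members of the same VSS
print(can_bundle(["sw-a", "sw-c"]))  # two unrelated switches
```

The first two calls succeed because the server sees a single logical partner; the third fails because the links land on two independent switches.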

Virtual Port Channel

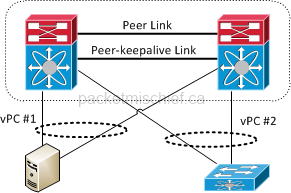

A Virtual Port Channel (vPC) is an enhancement to MEC that provides the same sort of functionality, building a port channel across two switches, without the need for StackWise or VSS.

Both StackWise and VSS go far beyond just port channels: they actually combine the control and management planes of the member switches, effectively turning them into one logical entity. This doesn't sound too bad when you consider you've gone from (2) touch points down to just (1), but there can be some increased risk at the same time. Most notably, you've now got a single instance of your control protocol processes (STP, OSPF, BGP, DTP, LACP, etc.) running on the (logical) switch. A software bug or protocol error will cause the protocol to hiccup across the whole stack/VSS. Next, since the management plane is also combined, there's one common configuration file. A fat-fingered config line or a change control gone bad could have serious consequences since it will affect both physical switches in the stack/VSS.

Having said all that, it really highlights the benefit of vPC: the control and management planes remain separate! It's really the best of both worlds. You can build a cross-chassis port channel without taking on the risk of combining the control and management planes.

Note the special links connecting the two vPC member switches together. They are used to carry control, configuration, and keepalive data between the two. As shown, since a vPC uses LACP for signalling (it could also use no signalling and just be manually configured), a virtual port channel can connect to regular Ethernet switches as well as servers that support regular port channels.

As of July 2015, vPC is available on:

- Nexus 7700 and 7000

- Nexus 5000, 5500, and 5600 series switches

- Nexus 9500 and 9300 series switches running in standalone NX-OS mode

  - Check the software release notes on the Nexus 9k switches for any caveats or restrictions with respect to vPC. Don't assume that vPC on the 9k has feature parity with vPC on the 7k or 5k.

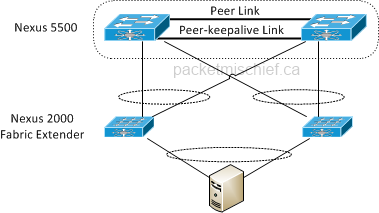

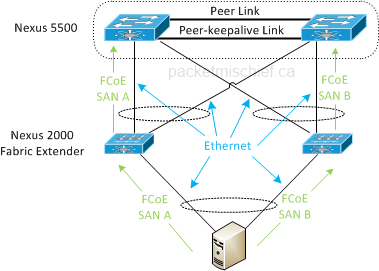

There is a major topology restriction with vPC when using the Nexus 2000 series Fabric Extender (FEX) in conjunction with the 5x00: you cannot configure a dual-layer vPC as shown in the accompanying diagram.

"Dual layer" is a reference to the vPC configured between the 5500 and the FEXes and then from the FEXes to the end device. With vPC, you have to do one or the other. That said, if you read the EvPC section below you'll see that there is a way to run this topology successfully.

Virtual Port Channel Plus

Where vPC was an enhancement to MEC, vPC+ is, naturally, an enhancement to vPC.

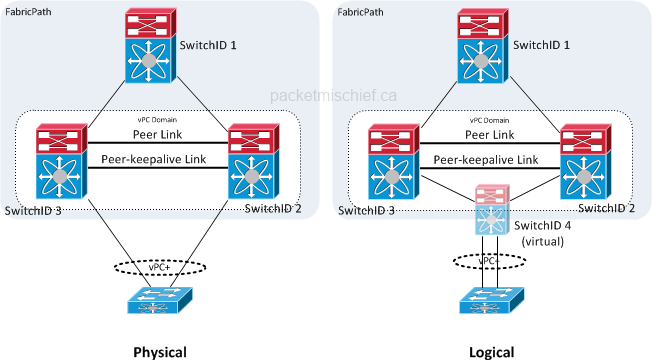

vPC+ is used in a FabricPath domain where a virtual port channel is configured from fabric-member switches towards some non-fabric device such as a server or what's termed a "classic" Ethernet (CE) switch. vPC+ allows a non-fabric device to connect to two fabric switches for redundancy and multi-pathing purposes and also allows traffic to be load balanced on egress of the fabric towards the non-fabric device.

The picture shows the physical and logical representations of a vPC+ connection. Physically, the CE switch is cabled to two independent FabricPath switches. Those two switches are in a vPC domain. The magic of vPC+ is that the member switches logically instantiate a virtual switch (SwitchID 4) which sits between them and the CE switch. This logical switch is advertised to the rest of the FabricPath network as being the edge switch where the CE switch is connected.

This is the key to enabling load balancing of traffic egressing the fabric towards the CE switch. In the sample network above, in order to reach the CE switch, Switch 1 will address its FabricPath frames to Switch 4, for which it has (2) equal-cost paths. If the logical topology mirrored the physical topology, Switch 1 would have to address its frames to either Switch 2 or Switch 3 (but not both, because the FabricPath forwarding table can only hold a single switch ID for a given destination), which would result in no load balancing.
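The equal-cost path argument can be checked with a short Python sketch. It assumes the topology described in the text (Switch 1 has one link each to Switch 2 and Switch 3, which are vPC peers, and the virtual Switch 4 hangs off both peers) and treats every link as cost 1. The `equal_cost_paths` helper is hypothetical and is not how FabricPath IS-IS is actually implemented.

```python
from collections import deque


def equal_cost_paths(graph, src, dst):
    """Count the shortest (equal-cost) paths from src to dst, all links cost 1."""
    dist = {src: 0}
    count = {src: 1}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        for nbr in graph[node]:
            if nbr not in dist:
                # First time we reach nbr: record its distance and path count.
                dist[nbr] = dist[node] + 1
                count[nbr] = count[node]
                queue.append(nbr)
            elif dist[nbr] == dist[node] + 1:
                # Another shortest path into nbr through this node.
                count[nbr] += count[node]
    return count.get(dst, 0)


# Logical FabricPath view: virtual Switch 4 is reachable via Switch 2 and Switch 3.
logical = {
    1: [2, 3],
    2: [1, 3, 4],  # 2 <-> 3 is the link between the vPC+ peers
    3: [1, 2, 4],
    4: [2, 3],
}

paths_to_virtual = equal_cost_paths(logical, 1, 4)
paths_to_physical = equal_cost_paths(logical, 1, 2)

print("equal-cost paths from Switch 1 to Switch 4:", paths_to_virtual)
print("equal-cost paths from Switch 1 to Switch 2:", paths_to_physical)
```

Addressing frames to the virtual switch gives Switch 1 two next hops to balance across, while addressing them to Switch 2 directly leaves only one.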

Enhanced Virtual Port Channel

Another spin on vPC! OK, this one addresses the limitation explained in the vPC section above where you cannot have a dual-layer vPC between Nexus 5500s and the FEXes and between the FEXes and the end device. EvPC allows that exact topology. In addition, EvPC also maintains SAN A/B separation even though there is a full mesh of connectivity.

Between the Nexus 5500s and the FEXes, the FCoE storage traffic maintains that traditional "air gap" between the A and B sides and never crosses onto a common network element. This is accomplished through explicit configuration on the 5500s which tells the left FEX to send FCoE traffic only to the left 5500 and the right FEX to send it only to the right 5500. The regular Ethernet traffic can traverse any of the links anywhere in the topology.
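The forwarding rule described above can be modelled in a few lines of Python. This is a sketch of the behavior only; the device names, the `FCOE_PIN` table, and the `allowed_parents` function are hypothetical and do not correspond to actual NX-OS configuration.

```python
# Hypothetical EvPC topology: each FEX is cabled to both parent switches.
UPLINKS = {
    "fex-left": ["n5k-left", "n5k-right"],
    "fex-right": ["n5k-left", "n5k-right"],
}

# FCoE is explicitly pinned so that SAN A and SAN B never share an element.
FCOE_PIN = {
    "fex-left": "n5k-left",    # SAN A
    "fex-right": "n5k-right",  # SAN B
}


def allowed_parents(fex: str, traffic: str) -> list:
    """Return the parent switches a FEX may forward this traffic type to."""
    if traffic == "fcoe":
        return [FCOE_PIN[fex]]
    # Regular Ethernet traffic may use any uplink in the mesh.
    return list(UPLINKS[fex])


print(allowed_parents("fex-left", "fcoe"))
print(allowed_parents("fex-right", "fcoe"))
print(allowed_parents("fex-left", "ethernet"))
```

Even though both FEXes have links to both parents, the FCoE lookup never returns the opposite side, which is what preserves the A/B separation.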

The difference between vPC and EvPC exists because of limitations in the Nexus 5000 platform (i.e., the 5010 and 5020) that prevent it from supporting EvPC. The Nexus 5500 platform supports EvPC and does not require any specific FEX model to make it work.

Update July 23 2015: The Nexus 5600 also supports EvPC.

Disclaimer: The opinions and information expressed in this blog article are my own and not necessarily those of Cisco Systems.